AI in Training Simulators: Where It Actually Adds Value

Everyone Is Talking About AI in Training

AI is everywhere right now, especially in learning and development.

But if you look closely at most implementations, they fall into one of two buckets: surface-level features that don't change outcomes, or ideas that sound compelling in a demo but are only truly operational in highly specialized environments.

Think flight simulators. Military combat training. Industries with decades of investment and near-unlimited budgets for scenario complexity.

Those are real. And they're impressive. But they're also a long way from where most organizations are and an even longer way from what most training programs actually need.

The more useful question isn't "what's AI doing in the most advanced simulators in the world?"

It's "where does AI actually add value for the training programs most companies are running today?"

That's what we're exploring and what we're increasingly being asked about by the organizations we work with.

The Starting Point That Gets Overlooked

There's a quiet assumption in a lot of AI conversations that technology will fix training.

It won't.

If training is hard to access, inconsistent across teams, or built on static one-size-fits-all content, adding AI doesn't solve those problems. It layers complexity on top of them.

Before AI can add meaningful value, the training environment itself has to work. It needs to be scalable and accessible, and, critically, it needs to capture consistent, reliable data about how people are actually performing.

That last part matters more than most people realize.

Because the foundation of anything useful in AI-assisted training isn't the AI itself. It's the data underneath it.

What Good Training Data Already Tells You

Before we even get to AI, there's significant value in what well-instrumented simulators already capture.

When someone works through a training simulation, the data tells a story:

How long did they take on each step?

Where did they hesitate or make errors?

What did they get right the first time versus after multiple attempts?

How did their performance shift from practice modules to quiz or assessment mode?

Did they improve over repeated sessions, and how quickly?

This is meaningful performance data. Not just "did they complete the course", but a real picture of where someone struggled, where they're confident, and whether they're actually improving.
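To make those questions concrete, here is a minimal sketch of what one logged attempt and a per-step summary might look like. The `StepAttempt` fields and the `summarize_steps` helper are hypothetical names chosen for illustration, not the schema of any particular simulator.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class StepAttempt:
    """One attempt at one step. All field names are illustrative assumptions."""
    learner_id: str
    session: int      # 1, 2, 3... for repeated sessions
    step: str         # name of the step in the simulation
    mode: str         # "practice" or "assessment"
    attempt: int      # 1 = first try at this step within the session
    seconds: float    # time spent on this attempt
    correct: bool


def summarize_steps(attempts):
    """Group attempts by step and compute time, errors, and first-try success."""
    by_step = defaultdict(list)
    for a in attempts:
        by_step[a.step].append(a)

    summary = {}
    for step, rows in by_step.items():
        first_tries = [r for r in rows if r.attempt == 1]
        summary[step] = {
            "mean_seconds": mean(r.seconds for r in rows),
            "error_count": sum(not r.correct for r in rows),
            # Share of first attempts that were correct; None if none were logged.
            "first_try_rate": (
                sum(r.correct for r in first_tries) / len(first_tries)
                if first_tries else None
            ),
        }
    return summary
```

Filtering the same records by `mode` or `session` before summarizing would cover the practice-versus-assessment and improvement-over-time questions.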

Most organizations aren't fully using what they already have. And this is exactly where a conversation about AI should start. Because AI is only as useful as the data it's working with.

Where AI Fits Into That Picture

Once you have reliable performance data coming out of a simulator, AI starts to become genuinely interesting. Here's where we see the most realistic value:

Surfacing Insights From Performance Data

Raw data is useful. Interpreted data is powerful.

AI can help identify patterns that would take a human analyst hours to find: which steps consistently trip people up, which learner profiles tend to struggle in similar ways, and where the training itself might need to be adjusted.

For L&D teams managing training across large or distributed workforces, that kind of analysis at scale is hard to do manually. AI makes it more tractable.

Chatbot-Style Support During or After Training

One of the more practical near-term applications we're looking at is conversational support: a chatbot interface that learners can interact with during or after a simulation.

Not replacing the simulation itself. But available when someone is stuck, needs clarification, or wants to understand why they got something wrong.

This is less about cutting-edge AI and more about making support accessible at the moment it's needed, without requiring a trainer or supervisor to be present.

Adaptive Learning - Training That Responds to the Individual

Most training treats everyone the same, regardless of experience level. A seasoned technician sits through the same steps as someone in their first week.

AI has the potential to change this by adjusting difficulty based on demonstrated skill, moving faster for experienced users, and adding reinforcement when someone is clearly struggling.

This is more complex to implement well. But the performance data that simulators already capture is exactly what makes it possible. The data tells you where someone is. AI decides what they need next.
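As a rough illustration of "the data tells you where someone is, AI decides what they need next," the simplest possible version is a threshold rule rather than a learned model. The function name, the 1 to 5 difficulty scale, and the 80% and 50% cutoffs below are all assumptions made for this sketch.

```python
def next_difficulty(current, recent_results, min_level=1, max_level=5,
                    step_up_at=0.8, step_down_below=0.5):
    """Pick the next difficulty level from a learner's recent results.

    recent_results is a list of booleans (True = step done correctly).
    The levels and thresholds are illustrative defaults, not recommendations.
    """
    if not recent_results:
        return current  # no evidence yet, so change nothing

    accuracy = sum(recent_results) / len(recent_results)
    if accuracy >= step_up_at:
        return min(current + 1, max_level)   # move faster for strong performers
    if accuracy < step_down_below:
        return max(current - 1, min_level)   # add reinforcement when struggling
    return current
```

A production system would likely weigh more signals (time per step, error types, session history), but even a rule this simple depends on the simulator recording per-step results reliably.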

Moving From Completion Metrics to Competency Signals

This is where AI could have the highest long-term impact.

Right now, most organizations measure training success by completion rates. That metric tells you almost nothing about readiness.

With detailed simulation data (decision-making patterns, error frequency, and improvement rates across sessions), AI can start to build a more honest picture of whether someone is actually prepared to perform, not just whether they finished the course.

That shift, from measuring activity to measuring competency, is where the real value lives.
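The difference between a completion metric and a competency signal can be sketched in a few lines. This assumes each assessment session yields an accuracy score between 0 and 1. The `competency_signal` name, the three-session window, and the 0.85 threshold are hypothetical choices for illustration only.

```python
def competency_signal(session_scores, window=3, threshold=0.85):
    """Contrast 'completed' with a simple readiness signal.

    session_scores: assessment accuracy per session (0.0 to 1.0), oldest first.
    window and threshold are illustrative assumptions.
    """
    recent = session_scores[-window:]
    recent_mean = sum(recent) / len(recent) if recent else 0.0
    # Change from the first session to the latest one.
    improvement = (
        session_scores[-1] - session_scores[0] if len(session_scores) >= 2 else 0.0
    )
    return {
        "completed": len(session_scores) > 0,   # the usual completion metric
        "recent_mean": recent_mean,
        "improvement": improvement,
        # Ready only with a full window of sessions at or above the threshold.
        "ready": len(recent) == window and recent_mean >= threshold,
    }
```

Two learners can both show `completed: True` while only one shows `ready: True`, which is the gap completion rates hide.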

What the Most Advanced Simulators Are Doing

It's worth being clear-eyed about the broader landscape.

Flight training, military simulation, and surgical training have been integrating AI for years: dynamic scenario generation, real-time adaptive difficulty, and AI-driven opponents and environmental variables. The results are genuinely impressive.

But those systems represent enormous investment, highly specialized development, and use cases where the cost of failure is catastrophic. They've earned the complexity.

For most training programs (onboarding, compliance, equipment operation, and safety procedures), the path to AI value looks different. It's less about building a dynamic AI-driven world and more about using AI to understand performance, personalize the experience, and give learners better feedback.

The principles are the same. The implementation scale is very different.

The Questions Worth Asking

If you're evaluating where AI fits in your training program, these are the questions we think matter most:

Does your simulator capture meaningful performance data? If not, that's the starting point - not AI.

What are you currently doing with that data? Most organizations have more signals than they're acting on.

What problem are you actually trying to solve? Better learner support? Faster competency development? Smarter reporting for managers? The answer shapes which AI applications are worth pursuing.

What's the cost of complexity? Not every AI feature justifies the implementation burden. Simpler solutions, like better data reporting or chatbot support, often deliver more practical value than sophisticated adaptive systems.

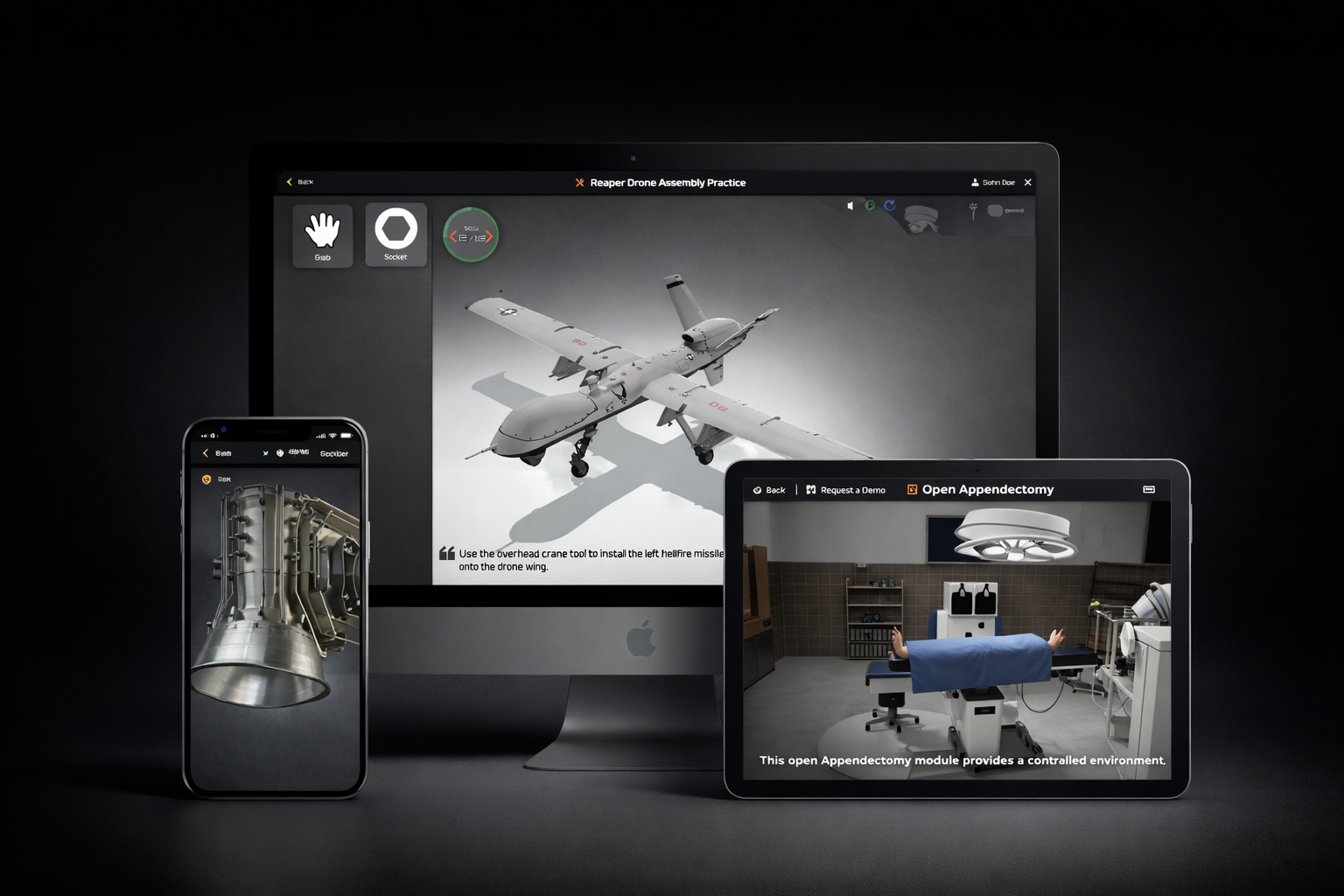

Where We Are

We're actively exploring how AI can be integrated into simulation-based training in ways that improve real outcomes, not just add a feature that looks good in a demo.

That includes how conversational tools can support learners, how performance data from our simulators can be used more intelligently, and how reporting can move from tracking activity to surfacing genuine competency signals.

We don't think AI is a magic layer you add on top of training. We think it's a set of tools that, used in the right places, can make training more relevant, more personalized, and more honest about whether it's actually working.

If that's a conversation you're having in your organization, we'd be glad to think through it with you.

Curious how simulation-based training can serve as the data foundation for smarter learning? Reach out to us or explore our simulators.